Software plugins are often forecasters of future fatigue: as off-the-shelf solutions, the ease with which they produce satisfying results also dictates how quickly their techniques become widespread, and how quickly our eyeballs become immune to them. Zoetroepe Software’s recent batch of plugins are attempts at ‘generative design instruments’ (for Final Cut Pro X, Adobe Premiere, Adobe After Effects and Apple Motion) which encourage and reward experimentation – an unsurprising approach, given they spring from the creator of VDMX (real-time video software), Johnny De Kam. (See the interview below the reviews.)

“My underlying design philosophy is akin to synthesizers in music, that is, visual instruments with oscillators and various visual ‘forms’ as source compositions. I tend to design these instruments to be open, allowing you the greatest flexibility to influence the output, a good dose of ‘meta’ design that can get you results quickly, and finally I strive to build systems with unique aleatoric progression, randomness and style capable of producing unexpected results… I take great joy in crafting shape-specific UV texture maps that preserve the best aspect ratio for maximum video impact.”

– Johnny De Kam

Overall, the Zoetroepe collection of plugins focuses on colour controls, pattern generation and geometric transformations, but it’s the way they’ve been built that distinguishes them from a lot of the more directly functional plugins out there. Let’s start with the juiciest:

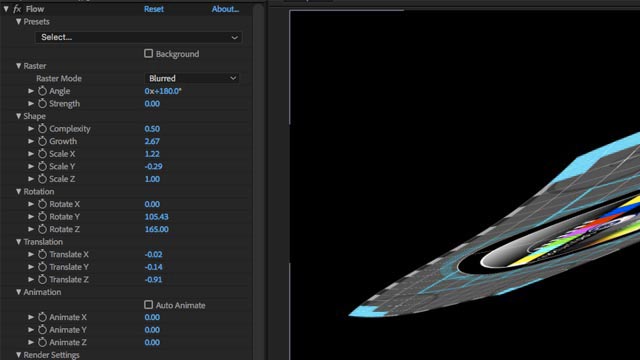

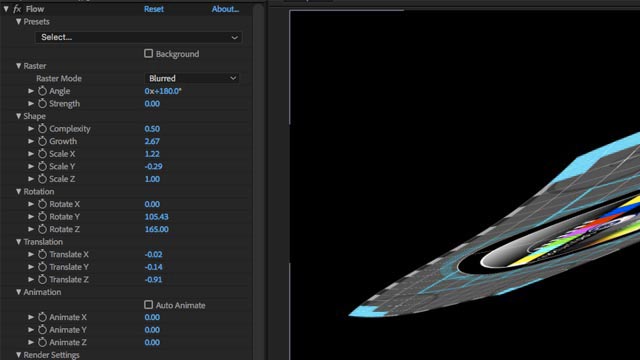

FLOW: Organic Generative Design

“An organic, generative design plugin … which lets you explore and iterate simple or complex curving and flowing forms in 3D space, using any video source in your timeline.”

This was one of my favourite Zoetroepe plugins to use. Some pretty wild shape distortions are possible, and the automated mode is fun: it lets some of the oscillators define and keep animating certain behaviours, while other parameters can be tuned to fit or juxtapose with those. An adjustable streaked motion blur option is a nice touch too, handy for muting video textures at times.

– lacks continuous rotation for some parameters, e.g. being able to keyframe several full turns (multiples of 360 degrees), rather than just 0-360…

– could use some presets as interesting starting points
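To illustrate the continuous rotation point, here is a minimal Python sketch of the general idea, not of how FLOW itself works: if a keyframed angle is stored unwrapped, animating from 0 to 720 gives two full turns, and the value only needs wrapping to 0-360 at render time. The function names and the keyframe format are hypothetical.

```python
def angle_at(t, keyframes):
    """Linearly interpolate an unbounded rotation (in degrees) at time t.

    keyframes is a list of (time, degrees) pairs sorted by time.
    Degrees are NOT wrapped to 0-360, so 0 -> 720 means two full turns.
    (Hypothetical format, for illustration only.)
    """
    if t <= keyframes[0][0]:
        return keyframes[0][1]
    for (t0, a0), (t1, a1) in zip(keyframes, keyframes[1:]):
        if t <= t1:
            u = (t - t0) / (t1 - t0)
            return a0 + u * (a1 - a0)
    return keyframes[-1][1]


def display_angle(degrees):
    """Wrap only for display; the stored value keeps its turn count."""
    return degrees % 360.0


# Two full turns over four seconds.
keys = [(0.0, 0.0), (4.0, 720.0)]
print(angle_at(3.0, keys), display_angle(angle_at(3.0, keys)))
```

A parameter clamped to 0-360 can only express the wrapped value, which is why a single pair of keyframes can never spin more than once.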

Capable of some organic and distinctive results, with plenty of surprises rewarding exploration.

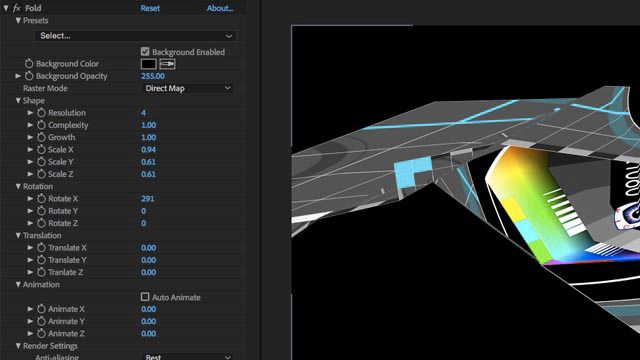

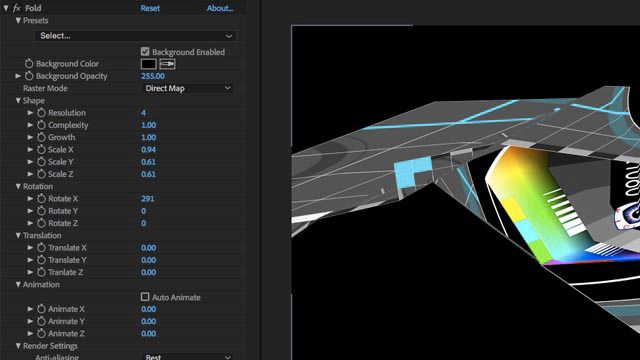

FOLD: Folding Generative Design

“A perpetually folding generative design instrument, manifested as a video effect plugin. With FOLD, you can explore and iterate simple or complex geometric forms in 3D space, using any video source in your timeline.”

Again, there’s a pleasure in exploring this tool. Happy accidents and unexpectedly pleasing shapes pop up regularly, and there’s an origami-flavoured fun in morphing between keyframed shapes. It’s super easy to generate unusual 3D animated shapes, ready for texturing with any desired video.

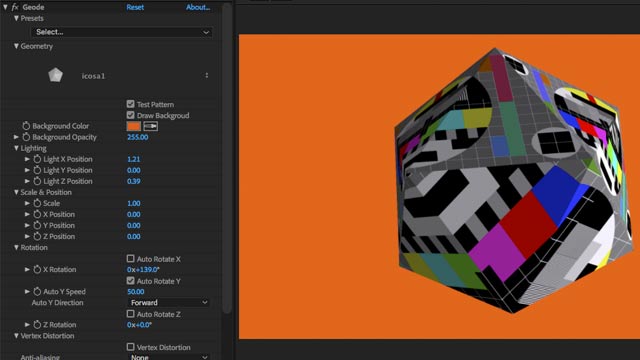

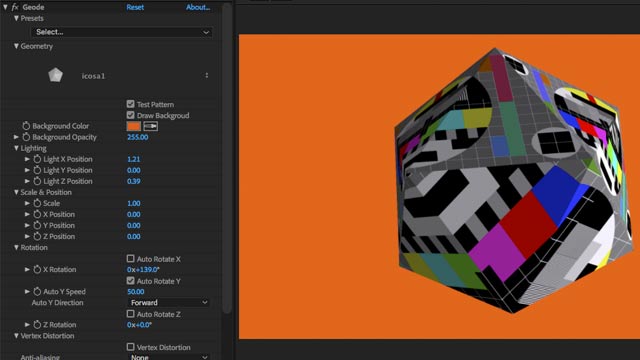

GEODE: 3D Mapping video

“A 3D video mapping & geometric alpha transition engine, based on meticulously crafted 3D mesh models – you can easily map a video source and animate it in space.”

There’s a lot of fun to be had adding video textures to objects as easily as this. With the technical details resolved, it’s straight into compositing and animating movement, scale and rotation over time. Duplicate layers to create shadows, composite against colours, textures, backgrounds (muted? coloured? blurred?), scale large for abstraction – everything happens fast with OpenGL acceleration. Enabling the vertex distortion algorithm animates individual vertex points in the model, offering up even more contortions on your video.

- could use mirror (and other modes) for tiling

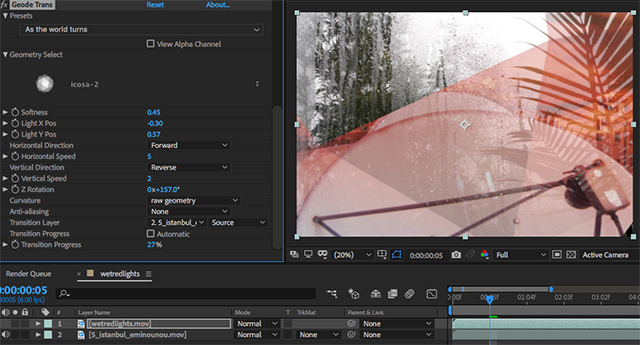

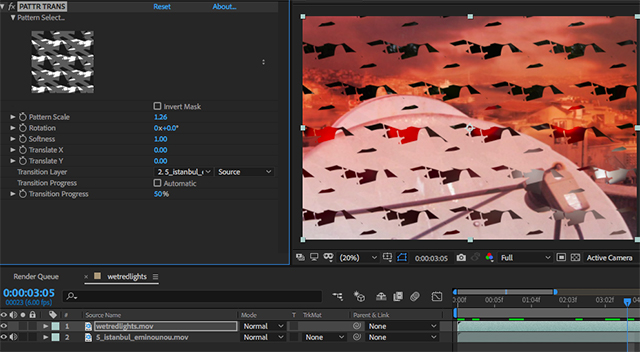

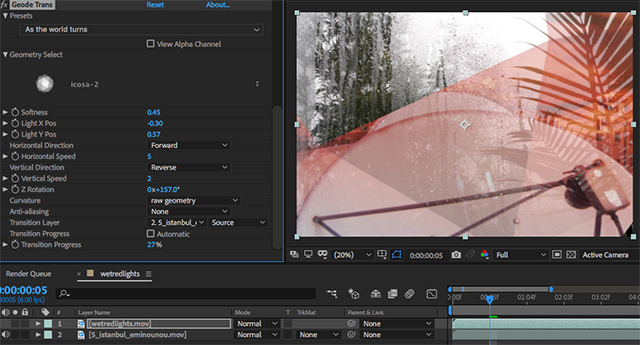

GEODE TRANS

Using this same 3D model-based approach, playful transitions are possible by using the alpha channel to define the transparency between A and B video over time. Load one of thirteen models, then define / animate the light source position, and adjust softness to vary the transition from hard edged wipe to gentle fade. In the screenshot above, a geometric transition is revealing the orange cityscape against the wet window clip. The combinations possible through object rotation, changing the light source, and softening the object edges really help tune the dynamics of the transition to the clips.
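For readers curious how a softness control can take a matte-driven transition from hard wipe to gentle fade, here is a rough per-pixel sketch in Python. It is an assumption about the general technique, not GEODE TRANS’s actual implementation: `matte` stands in for the greyscale value the lit 3D model would produce at a pixel, and every name here is hypothetical.

```python
def smoothstep(edge0, edge1, x):
    """Standard smooth 0..1 ramp between edge0 and edge1 (edge0 < edge1)."""
    u = min(1.0, max(0.0, (x - edge0) / (edge1 - edge0)))
    return u * u * (3.0 - 2.0 * u)


def transition_pixel(a, b, matte, progress, softness):
    """Blend value a (clip A) towards b (clip B) for one pixel.

    matte    -- greyscale value in 0..1 that orders when each pixel flips
    progress -- transition position in 0..1
    softness -- 0 gives a hard-edged wipe, larger values a gentler fade
    """
    softness = max(softness, 1e-6)  # avoid a zero-width ramp
    # Shift the threshold so the blend is all A at 0 and all B at 1.
    threshold = progress - softness * (1.0 - progress)
    mix = 1.0 - smoothstep(threshold, threshold + softness, matte)
    return a + (b - a) * mix


# Halfway through, a mid-grey matte pixel sits mid-blend with a soft edge.
print(transition_pixel(0.0, 1.0, matte=0.5, progress=0.5, softness=0.2))
```

Rotating the object or moving the light would change the matte values in a sketch like this, which is one way to picture why those controls alter the shape and timing of the wipe.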

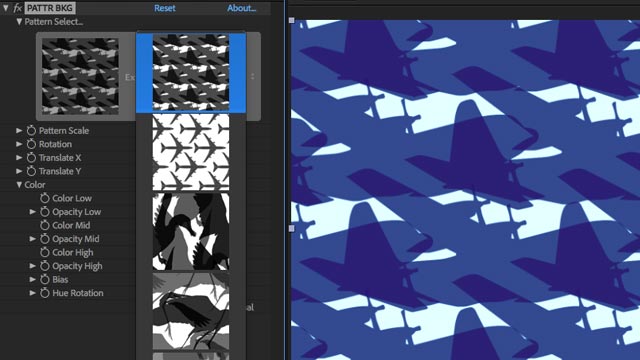

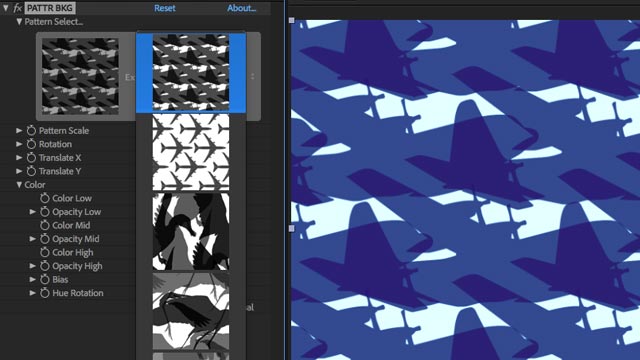

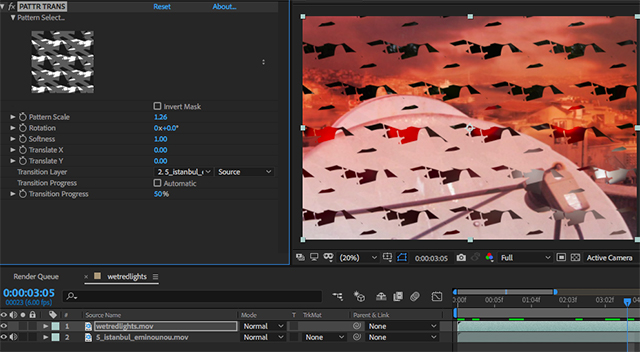

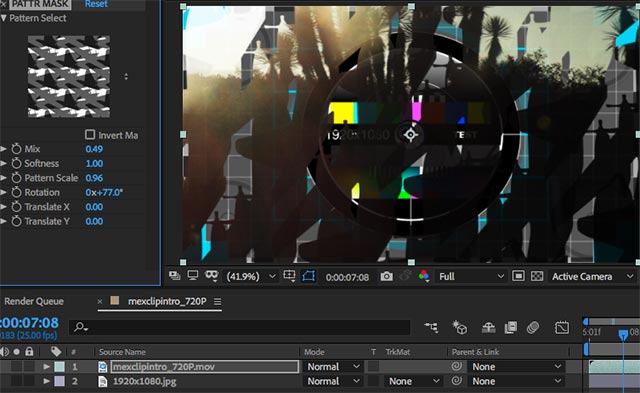

PATTR: Seamless Patterns Suite

“Our exclusive collection of 120+ patterns are tightly integrated within a trio of plugins designed for different parts of your workflow: PATTR BKG is a pattern background generator, PATTR TRANS is a transition engine, and PATTR MASK is a pattern mask composite effect. Within each plugin you can, seamlessly scale, rotate, translate and animate each pattern”

These work nicely – scale, rotation and movement options facilitate a surprising amount of difference with each seamless pattern, and all of the patterns are stored in greyscale, which enables an in-built tri-tone colour mixer to mix, or hue-morph colours over time.

- Being able to use user defined textures to auto-generate the seamless patterns would be nice
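Storing the patterns in greyscale is what makes recolouring cheap. As a hedged sketch of the idea (the plugin’s real mixer may work differently, and all names below are made up for illustration), a tri-tone mapping can send each grey value through three chosen colours:

```python
def lerp_colour(c0, c1, u):
    """Linear blend between two RGB tuples, u in 0..1."""
    return tuple(x + (y - x) * u for x, y in zip(c0, c1))


def tritone(grey, shadow, mid, highlight):
    """Map a grey value in 0..1 to RGB.

    Values below 0.5 blend shadow -> mid; values above blend mid -> highlight.
    """
    grey = min(1.0, max(0.0, grey))
    if grey < 0.5:
        return lerp_colour(shadow, mid, grey * 2.0)
    return lerp_colour(mid, highlight, (grey - 0.5) * 2.0)


# A dark-grey pattern pixel, tinted with black / red / white.
print(tritone(0.25, (0, 0, 0), (1, 0, 0), (1, 1, 1)))
```

Animating the three input colours from frame to frame would give a hue-morph over time without ever touching the pattern itself.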

PATTR TRANS – Use seamless patterns as alpha channel transition between clips

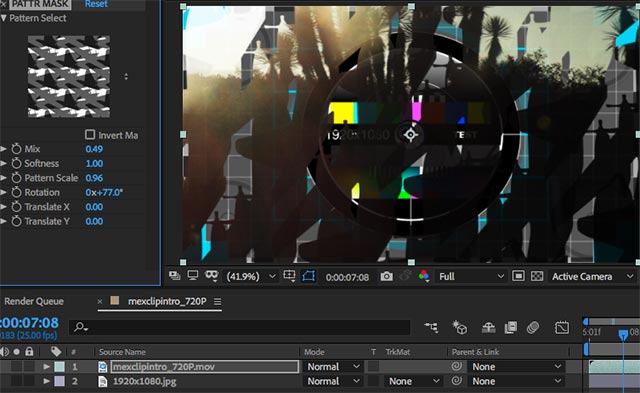

PATTR MASK – Use seamless patterns as alpha mask between clips

Above – using PATTR MASK shapes to composite Mexican landscape video above a test pattern.

MULTIVIEW: Split Screen Effects Engine

“A collection of nine multi-channel video generators, allowing you to display up to nine synchronized videos at once, with or without borders.”

As described: border size and colour controls, dropzones for FCP X, and synchronised multi-cam make for easy split-screen compositions.

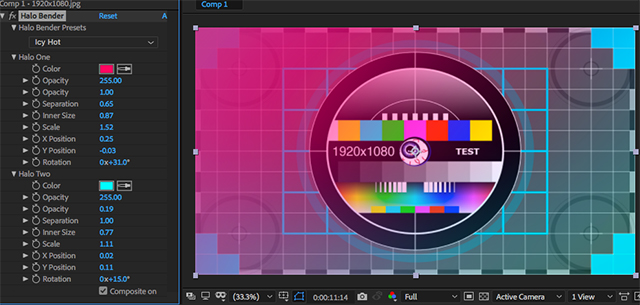

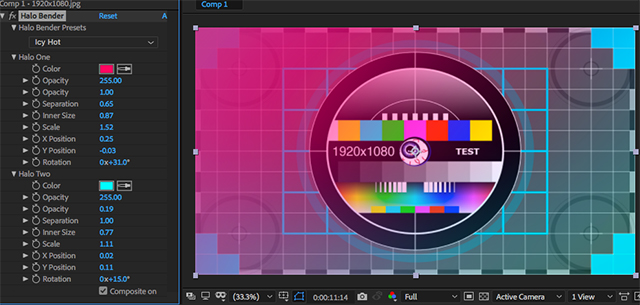

HALO BENDER: Organic Colour Effects

As a collection of keyframe-adjustable colour washes, leaks, gradients and vignettes, these offer surprising flexibility for easily generating sophisticated colour looks for existing footage. Offering more than just a set of vintage insta-filters, they’re highly customisable, and even the presets generate a range of uncommon and interesting colouring options.

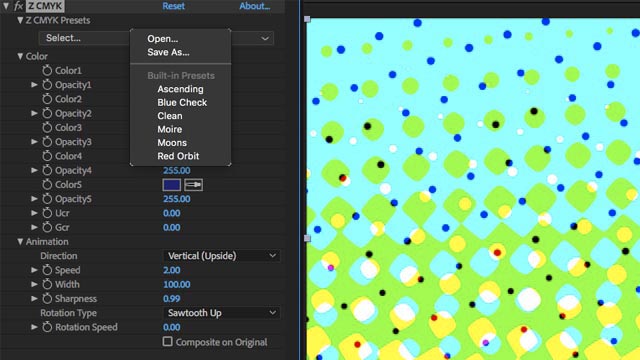

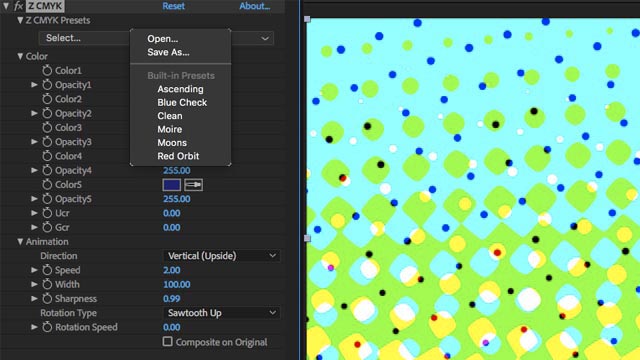

COLR: Animated Generative Colour Backgrounds

The four generator instruments (Z Breath, Z CMYK, Z Bars and Z Color Fields) offer easy and adjustable options for quick generation of title backgrounds, compositing elements and lighting effects. Minimal, efficient, convenient.

Other plugins available at Zoetroepe:

ZSuite – FCP X only – intriguing looking temporal suite… montage suite, colour suite

Painterly – 4K resolution paintstroke transitions

Requirements:

- After Effects, Premiere, or Final Cut Pro X on OS X.

- Each plugin is available at FxFactory, priced between $39 and $49 US.

Verdict:

The Zoetroepe plugins bring some of the fun of real-time visual software to the demands of the keyframeable production timeline. Well worth a spin for anyone looking for timeline-convenient ways to explore and experiment with compositing, visual styles and effects.

Examples of Use:

https://www.instagram.com/p/BkeRezlhC_x

https://www.instagram.com/p/BkjCt17HzS9

Interview with Johnny De Kam:

What have you been doing with video since creating VDMX?

I decided to pass the VIDVOX torch to David Lublin when it became clear there were opportunities in large scale project work: concert tours, installations, media festivals, etc. My first big tour was with Sasha & John Digweed & my client list grew steadily over time. Touring as video director or visual content director is something I’ve continued to do until very recently. I’ve also kept very engaged with the art world (which is where my formal training began). Through it all, I’ve continued to create custom software, systems and experiments. I’ve been lucky in that I’ve managed to keep a very interdisciplinary practice over the years.

Was there a fulldome phase in there somewhere?

Indeed there was. My first project when transitioning away from VIDVOX, circa 2004, was to create a digital planetarium for a children’s museum. That’s where I met the Elumenati, who had just started marketing their patented fulldome projection lenses. Within a year I started working for them directly, seeing what kind of real-time software we could create to drive immersive fulldome experiences. It was fascinating work, years ahead of its time in terms of VR. We mounted several live dome projects as well as a few permanent installations. Alas, the work was very niche and difficult to sustain, and concert tours kept calling me. I subsequently left to develop a full show for synth pop icon / renaissance man, Thomas Dolby.

What has interested you about the evolution of live video during that time?

It was a very new idea for concert tours to incorporate realtime visual content. So-called media servers were in their infancy. The electronic music scene was very hip to it all, but outside of this, only a handful of VJs had managed to break out into the larger concert production industry. It was a very interesting place to be in. I had spent years of my life pioneering my tools with VIDVOX, and now I was able to see the fruits of my labor work in front of massive audiences around the world. The pursuit then became more about the content itself, and advancing the ways in which we could create and manipulate it live, and how it could seamlessly integrate with the lighting and set design. It has been fascinating to watch the technology continue to evolve.

I am so proud that VDMX continues to be such a powerful tool, used by so many people, on so many productions.

What led you into exploring abstract plugins for editing and post production software?

With touring, working for Grammy-winning acts, television, the whole thing, I found I was traveling most of the year. Then, my wife and I had our daughter, and it quickly became important for me to focus on my family and get off the road.

I struggled a bit to figure out how I could pivot my career. We moved to Boston and I started doing ‘traditional’ video editing and production for the many universities here, such as Harvard and MIT. It dawned on me that I had one true calling that I’ve always loved, and that was video software. FxFactory is based here in Boston, which if you didn’t know, can use Quartz Composer under the hood to create plugins. I love QC so it just made a lot of sense.

I saw a unique opportunity to explore some perennial creative ideas I’ve worked with, but in the plugin space. Most plugins out there are purpose built for utility and saving time. I am more interested in the artistic and generative design possibilities. The downside perhaps is that I’ve curtailed my market by doing so, as they may seem a bit idiosyncratic and weird at first glance, but hopefully there are some folks that realize their true potential.

Which of your plugins do you enjoy using the most?

Each one has its own unique appeal. One of my favorite strategies is to use COLR as a source generator, and then apply FLOW or FOLD to play with shape. It is endlessly fun! I’m also proud of GEODE, my equivalent to ‘basic research’ — It is deceptively simple, but ultimately powerful when you explore its potential.

What opportunities exist for 3D software today?

I think there are a lot of interesting things one can do with 3D, but for years I stayed away from it because I found the mechanics so tedious. People who model and animate 3D really have a special temperament; it is not how I like to work, but I often find I must. For me, I am most interested in live generation and manipulation of the 3D form… to bring the immediacy of raster based instruments like VDMX to the 3D space. I would like to build visual instruments that use 3D vectors as their source.

You’ve mentioned the plugins work better in FCP X rather than the Adobe suite – because they were built using Quartz Composer, which I’ll presume FCP X integrates better. Is QC the reason behind the large number of plugins that are FCP X only?

It’s not because of QC, it is because Apple allowed Motion to be a plugin and template engine for FCPX. Anyone with mograph experience can get their feet wet making plugins, even sell them if they like. FxFactory facilitates a lot of this. PixelFilmStudios is another player that really works almost exclusively with Apple Motion as their dev tool.

As for Adobe CC, part of the magic with the FxFactory ecosystem is that they ‘wrap’ plugins built natively with Quartz into a package that Premiere and After Effects can use directly, which has allowed people like me to make products without needing to work in Xcode, and be able to address both Apple’s and Adobe’s ecosystem with the same source. I think my plugins run ‘better’ in FCPX simply because FCPX is faster than Adobe. Mind you, this is a Mac OS X discussion we are having here.

What are your thoughts about the role and future of QC today – within the Plugin developer community – and for coder artists in general?

People have been saying for years QC is going to die. Yet it hasn’t happened, nor has Apple ever indicated they plan to. I see it a bit like QuickTime. It is so fundamental and so many products rely on it that Apple would be shooting itself in the foot to actively kill it. That said, what they are doing is building more tech around Metal and making it easier to code with Swift, at the same time training young ones in Swift from the start. It’s a powerful long term strategy when you think about it. Eventually there won’t really be a need for Quartz (in their mind).

There will always be a place for node-based graphical programming, so even if Apple doesn’t release ‘a new Quartz Composer’ I am absolutely certain other tools will be filling the space (as they already are): Vuo, VVVV, FxCore, Max/Jitter, TouchDesigner, etc.

Do you have future Zoetroepe plugins in mind?

I’m working on something completely different than plugins right now. I mentioned earlier an interest in realtime 3D instruments… and this is where I’m heading – a return to application software rather than plugins. I hope it will have a broader appeal. I’m particularly interested in the design community, who I think have much to gain by exploring instruments rather than timelines and canvases. I also have a keen eye on blockchain tech, but I really can’t say anything else about that right now.


See also: reviews of VDMX 5 (2012), VDMX 4 (2003), VDMX 2 (2002) and Grid Pro (2005)

and : VDMX Masterclass with David Lublin at ACMI

by j p, September 25, 2018